Tokens & Costs

Language models do not process text character by character. They break text into tokens — chunks that average roughly 4 characters in English. Understanding tokens helps you predict costs, optimize context usage, and choose the right model for each task.

What Tokens Are

A token is the smallest unit a language model reads and generates. Some examples:

- "hello" → 1 token

- "Hello, world!" → 4 tokens

- A 1,000-word English document → approximately 1,300 tokens

- A block of Python code → typically more tokens than equivalent English prose (punctuation and indentation each consume tokens)

The exact tokenization varies by model family. GPT-4o, Claude, and Llama each use different tokenizers. QARK handles the differences — you see consistent token counts regardless of provider.

Three Types of Tokens

Every API call involves up to three token categories, each priced independently:

Input Tokens

Everything you send to the model: the system prompt, conversation history, tool definitions, retrieved RAG context, and your latest message. Input tokens are the largest controllable cost factor.

Output Tokens

Everything the model generates in response: the assistant message, tool call arguments, and structured output. Output tokens are typically priced 2–5x higher than input tokens per unit.

Thinking Tokens

When chain-of-thought thinking is enabled, some models (notably Anthropic’s Claude with extended thinking) produce internal reasoning tokens. These appear in collapsible thinking blocks in the conversation and are billed separately from input and output tokens. Not all models charge for thinking tokens; check the model registry for pricing details.

The Cost Formula

Every message cost follows this calculation:

cost = (input_tokens × input_price_per_token) + (output_tokens × output_price_per_token) + (thinking_tokens × thinking_price_per_token)

Prices come from the model registry, which QARK keeps current. For example, with a model priced at $3.00 per million input tokens and $15.00 per million output tokens:

- Sending 2,000 input tokens and receiving 500 output tokens costs:

(2000 × $0.000003) + (500 × $0.000015) = $0.006 + $0.0075 = $0.0135
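The calculation above can be sketched as a small Python function. The name `message_cost` and its per-million price parameters are illustrative assumptions, not QARK's internal API; the prices are the example figures from the text.

```python
def message_cost(
    input_tokens: int,
    output_tokens: int,
    thinking_tokens: int = 0,
    *,
    input_price_per_m: float,
    output_price_per_m: float,
    thinking_price_per_m: float = 0.0,
) -> float:
    """Return the USD cost of one message, given prices per million tokens."""
    tokens_per_million = 1_000_000
    return (
        input_tokens * input_price_per_m
        + output_tokens * output_price_per_m
        + thinking_tokens * thinking_price_per_m
    ) / tokens_per_million


# Example figures from the text: $3.00/M input, $15.00/M output.
cost = message_cost(2_000, 500, input_price_per_m=3.00, output_price_per_m=15.00)
print(f"${cost:.4f}")  # $0.0135
```

Working in per-million prices and dividing once at the end keeps the inputs in the same units the pricing is quoted in.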

Context Window vs Max Output Tokens

Two limits define a model’s capacity, and the model registry displays both:

- Context window — The total number of tokens the model can process in a single request (input + output combined). Ranges from 4,096 tokens on smaller models to 200,000+ on frontier models.

- Max output tokens — The maximum number of tokens the model can generate in a single response. Always smaller than the context window. Typically 4,096–16,384 tokens, though some models support up to 64,000+.

If your input consumes most of the context window, the model has fewer tokens available for its response. QARK’s context strategies help you manage this tradeoff.
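As a rough illustration of this tradeoff, the response budget is the smaller of the max output limit and whatever the context window has left after the input. The function name and the model figures below are hypothetical.

```python
def available_output_tokens(
    context_window: int, max_output_tokens: int, input_tokens: int
) -> int:
    """Tokens the model can still generate once the input is counted."""
    remaining = context_window - input_tokens
    return max(0, min(max_output_tokens, remaining))


# Hypothetical model: 200,000-token context window, 16,384 max output tokens.
print(available_output_tokens(200_000, 16_384, 50_000))   # 16384
print(available_output_tokens(200_000, 16_384, 190_000))  # 10000
```

In the second call the input leaves only 10,000 tokens of context, so the response is capped below the model's nominal output limit.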

Where to Monitor Costs

QARK surfaces cost data at three levels of granularity:

Per-Message Badge

Every assistant message displays a badge showing:

- Input token count

- Output token count

- Thinking token count (when applicable)

- Calculated cost in USD

Click the badge to see the full breakdown including the model used and per-token pricing.

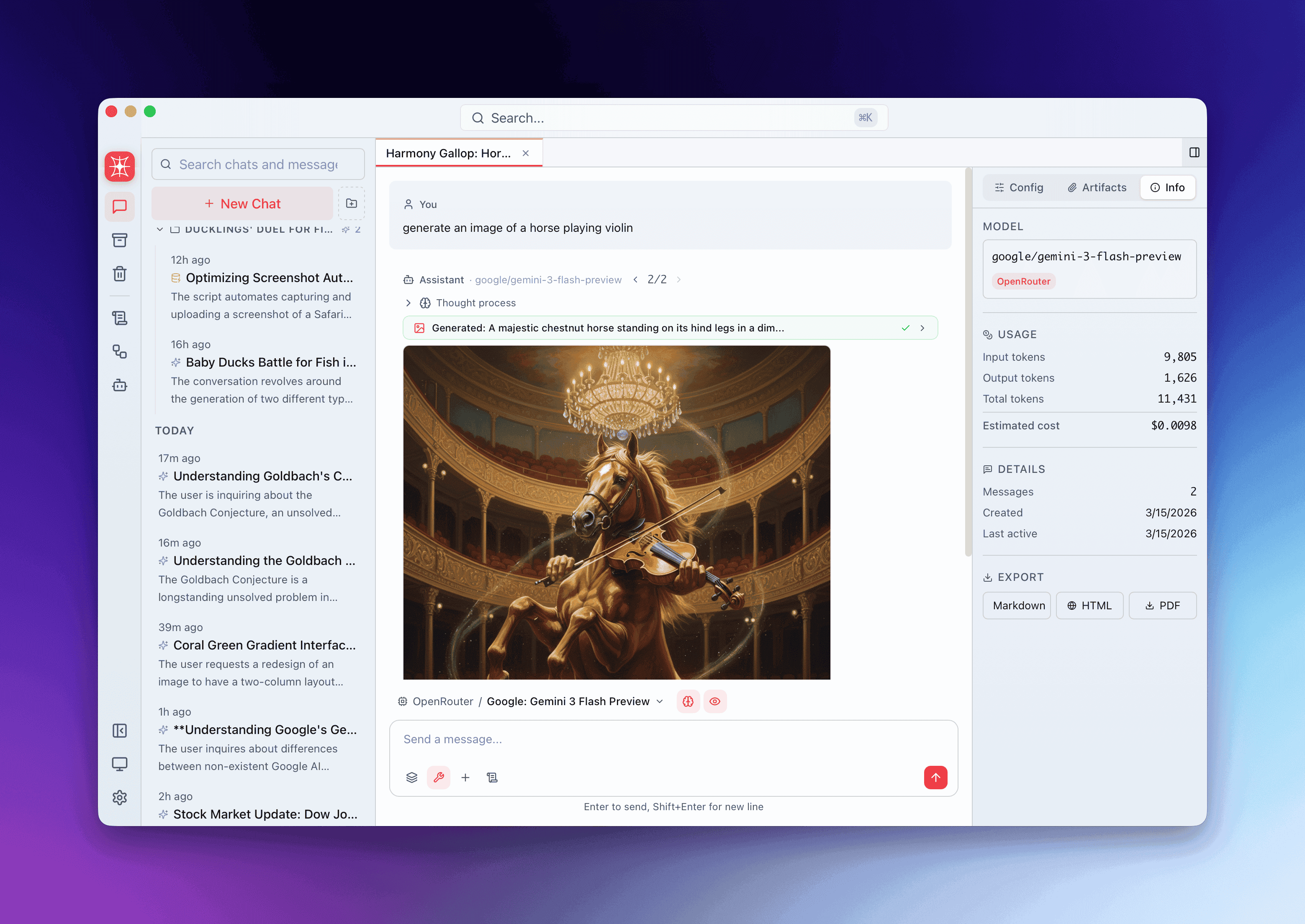

Conversation Info Tab

Open the Info Panel for any conversation. The overview shows:

- Total tokens consumed (input + output + thinking, summed across all messages)

- Total cost for the entire conversation

- Number of API calls made
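The overview figures are sums over per-message usage. A minimal sketch follows, assuming one API call per recorded message; `MessageUsage`, `conversation_totals`, and the sample numbers are hypothetical and do not reflect QARK's actual data model.

```python
from dataclasses import dataclass


@dataclass
class MessageUsage:
    input_tokens: int
    output_tokens: int
    thinking_tokens: int
    cost_usd: float


def conversation_totals(messages: list[MessageUsage]) -> dict[str, float]:
    """Sum tokens and cost across all messages in a conversation."""
    total_tokens = sum(
        m.input_tokens + m.output_tokens + m.thinking_tokens for m in messages
    )
    return {
        "total_tokens": total_tokens,
        "total_cost_usd": sum(m.cost_usd for m in messages),
        "api_calls": len(messages),  # assumption: one call per message
    }


# Hypothetical usage records for a two-message conversation.
messages = [
    MessageUsage(2_000, 500, 0, 0.0135),
    MessageUsage(2_600, 400, 1_200, 0.0318),
]
totals = conversation_totals(messages)
print(totals["total_tokens"], totals["api_calls"])  # 6700 2
```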

Budget Dashboard

The budget dashboard aggregates costs across all conversations, grouped by:

- Time period (daily, weekly, monthly)

- Provider

- Model

Set monthly budget limits to receive warnings when spending approaches your threshold.
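The grouping and warning logic might look something like the sketch below. The record fields, the provider and model names, and the 80% warning ratio are all assumptions made for illustration; the text does not say at what point QARK issues a warning.

```python
from collections import defaultdict


def cost_by_key(records: list[dict], key: str) -> dict[str, float]:
    """Total cost_usd per distinct value of the given field."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record[key]] += record["cost_usd"]
    return dict(totals)


def budget_warning(spent: float, monthly_limit: float, warn_ratio: float = 0.8) -> bool:
    """True once spending reaches warn_ratio of the limit (0.8 is an assumed default)."""
    return spent >= monthly_limit * warn_ratio


# Hypothetical cost records.
records = [
    {"provider": "provider-a", "model": "model-a", "cost_usd": 0.50},
    {"provider": "provider-a", "model": "model-b", "cost_usd": 0.25},
    {"provider": "local", "model": "model-c", "cost_usd": 0.0},
]
print(cost_by_key(records, "provider"))  # {'provider-a': 0.75, 'local': 0.0}
print(budget_warning(spent=8.5, monthly_limit=10.0))  # True
```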

Estimated Tokens During Streaming

While a response streams in, QARK cannot know the final token count until the API returns usage data. During streaming, QARK estimates output tokens using:

estimated_tokens = content.length / 4

This approximation (based on the ~4 characters per token average) updates in real time as content arrives. The badge switches to exact counts once the response completes and the API reports actual usage.
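A minimal Python sketch of this running estimate is shown here. The function name and the sample chunks are hypothetical, and rounding up is an assumption, since the formula does not say how fractional results are handled.

```python
import math


def estimate_tokens(content: str, chars_per_token: int = 4) -> int:
    """Approximate token count from character length (rounding up is an assumption)."""
    return math.ceil(len(content) / chars_per_token)


# Simulate a streamed response arriving in chunks.
chunks = ["Language models ", "break text ", "into tokens."]
content = ""
for chunk in chunks:
    content += chunk
    print(f"{len(content)} chars -> ~{estimate_tokens(content)} tokens")
```

The estimate is recomputed from the accumulated content on every chunk, so it never drifts from the text received so far.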

Cost Optimization Strategies

Choose a Smaller Context Strategy

Context strategies that send fewer historical messages reduce input tokens. A sliding window of the last 10 messages costs significantly less than sending the full conversation history. The token_budget strategy lets you set an explicit cap on input tokens per request.
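To make the two ideas concrete, here is a hedged sketch of a sliding window and a token-capped history. These functions are illustrative assumptions, not QARK's implementation of its strategies, and `rough_token_count` is a crude stand-in for a real tokenizer.

```python
from typing import Callable


def rough_token_count(text: str) -> int:
    """Crude estimate using the ~4 characters per token average."""
    return max(1, len(text) // 4)


def sliding_window(history: list[str], max_messages: int = 10) -> list[str]:
    """Keep only the most recent max_messages messages."""
    return history[-max_messages:] if max_messages > 0 else []


def token_budget(
    history: list[str],
    max_input_tokens: int,
    count_tokens: Callable[[str], int] = rough_token_count,
) -> list[str]:
    """Keep the newest messages whose combined token count fits the cap."""
    kept: list[str] = []
    used = 0
    for message in reversed(history):  # walk from newest to oldest
        tokens = count_tokens(message)
        if used + tokens > max_input_tokens:
            break
        kept.append(message)
        used += tokens
    kept.reverse()  # restore chronological order
    return kept


history = [f"message {i}" for i in range(1, 31)]
print(sliding_window(history)[0])                 # message 21
print(token_budget(history, max_input_tokens=7))  # ['message 28', 'message 29', 'message 30']
```

Both functions drop the oldest messages first, on the assumption that recent turns matter most to the next response.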

Use Cheaper Models for Iteration

Reserve frontier models for final outputs. During iteration (debugging, brainstorming, drafting), a model priced at $0.25 per million input tokens performs adequately for many tasks and costs one-twelfth as much per input token as a $3.00/M model.

Run Local Models at Zero Cost

Models served through Ollama or other local inference engines incur no per-token charges. QARK tracks token counts for local models (useful for context management) but reports $0.00 cost.

Leverage Model Speed and Intelligence Ratings

The model registry includes speed and intelligence ratings for each model. Sort by these ratings to find the best cost-performance tradeoff. A model rated 8/10 for intelligence at $1.00/M input tokens may serve your specific use case better than one rated 9/10 at $15.00/M.
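One way to reason about this tradeoff is to rank models by rating per dollar after filtering out those below a minimum rating. The sketch below is an illustration only: `ModelEntry`, the "intelligence per dollar" score, and the sample entries are hypothetical and are not part of the model registry.

```python
from dataclasses import dataclass


@dataclass
class ModelEntry:
    name: str
    intelligence: int          # rating out of 10
    input_price_per_m: float   # USD per million input tokens


def value_score(model: ModelEntry) -> float:
    """Intelligence rating per dollar; free (local) models rank first."""
    if model.input_price_per_m == 0:
        return float("inf")
    return model.intelligence / model.input_price_per_m


def rank_by_value(models: list[ModelEntry], min_intelligence: int = 0) -> list[ModelEntry]:
    """Drop models below the minimum rating, then sort by value, best first."""
    eligible = [m for m in models if m.intelligence >= min_intelligence]
    return sorted(eligible, key=value_score, reverse=True)


# Hypothetical registry entries.
registry = [
    ModelEntry("model-a", 8, 1.00),
    ModelEntry("model-b", 9, 15.00),
    ModelEntry("model-c", 6, 0.25),
]
print([m.name for m in rank_by_value(registry)])                      # ['model-c', 'model-a', 'model-b']
print([m.name for m in rank_by_value(registry, min_intelligence=8)])  # ['model-a', 'model-b']
```

The minimum-rating filter matters because a pure per-dollar score always favors the cheapest model, even when it is not capable enough for the task.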

Monitor Compaction Costs

When QARK compacts a conversation (summarizing older messages to free context space), the compaction itself consumes tokens. These costs are tracked separately in the conversation’s cost breakdown so you can distinguish between productive work and housekeeping overhead.