Cloud Providers

QARK supports 9 cloud providers. Each connects through the same workflow: paste your API key in Settings → Providers, and QARK validates the key and fetches the provider's model list. Every provider also accepts a custom base URL override for enterprise endpoints or proxies.
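The validate-and-fetch step can be pictured as a single request to each provider's model-listing endpoint. The sketch below assumes that approach; QARK's actual implementation is not documented here. It only builds the request (so it runs offline) and shows where a base URL override plugs in. `build_models_request` and `ProviderRequest` are hypothetical names. Perplexity is left out because the sketch does not assume a model-listing endpoint for it. OpenRouter's model list is public, so a successful response there does not prove the key is valid.

```python
from dataclasses import dataclass
from typing import Optional

# Default base URLs from this page (Perplexity omitted, see above).
DEFAULT_BASE_URLS = {
    "anthropic": "https://api.anthropic.com",
    "openai": "https://api.openai.com/v1",
    "gemini": "https://generativelanguage.googleapis.com",
    "groq": "https://api.groq.com/openai/v1",
    "together": "https://api.together.xyz/v1",
    "xai": "https://api.x.ai/v1",
    "openrouter": "https://openrouter.ai/api/v1",
    "deepseek": "https://api.deepseek.com",
}


@dataclass
class ProviderRequest:
    method: str
    url: str
    headers: dict


def build_models_request(provider: str, api_key: str,
                         base_url: Optional[str] = None) -> ProviderRequest:
    """Build (but do not send) a model-list request; base_url overrides the default."""
    base = (base_url or DEFAULT_BASE_URLS[provider]).rstrip("/")
    if provider == "anthropic":
        # Anthropic authenticates with x-api-key and requires a version header.
        return ProviderRequest("GET", f"{base}/v1/models",
                               {"x-api-key": api_key, "anthropic-version": "2023-06-01"})
    if provider == "gemini":
        # The Gemini API accepts the key in the x-goog-api-key header.
        return ProviderRequest("GET", f"{base}/v1beta/models", {"x-goog-api-key": api_key})
    # OpenAI-compatible providers: the base URL already carries any version prefix.
    return ProviderRequest("GET", f"{base}/models", {"Authorization": f"Bearer {api_key}"})


print(build_models_request("groq", "YOUR_KEY").url)
# https://api.groq.com/openai/v1/models
```

Passing a proxy URL as `base_url` reuses the same path logic, which is why a single override field can serve every provider.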

Anthropic

API key: console.anthropic.com → API Keys

Base URL: https://api.anthropic.com

Capabilities: Chat

| Model | Context | Output | Thinking | Vision | Tools |
|---|---|---|---|---|---|
| Claude Opus 4.6 | 200K | 128K | Adaptive | Yes | Yes |
| Claude Sonnet 4.6 | 200K | — | Adaptive | Yes | Yes |
| Claude Opus 4.5 | 200K | 64K | Yes | Yes | Yes |
| Claude Sonnet 4.5 | 200K | — | Yes | Yes | Yes |
| Claude Haiku 4.5 | 200K | — | Yes | Yes | Yes |

All Claude models support extended thinking — the model reasons step-by-step before responding. Opus 4.6 and Sonnet 4.6 support adaptive thinking mode, which lets the model decide when and how deeply to reason. Vision works with images, diagrams, screenshots, and documents.
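When calling Anthropic directly, extended thinking is requested through the `thinking` field of the Messages API. The sketch below uses a fixed thinking budget; the model ID and token numbers are illustrative assumptions, and adaptive mode on the 4.6 models may be configured differently. The helper enforces the API's rule that `budget_tokens` is at least 1024 and smaller than `max_tokens`. `build_thinking_request` is a hypothetical name.

```python
import json

ANTHROPIC_VERSION = "2023-06-01"


def build_thinking_request(api_key, prompt, model="claude-sonnet-4-5",
                           max_tokens=16000, budget_tokens=8000):
    """Build a Messages API request with a fixed extended-thinking budget."""
    if budget_tokens < 1024:
        raise ValueError("budget_tokens must be at least 1024")
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be smaller than max_tokens")
    headers = {
        "x-api-key": api_key,
        "anthropic-version": ANTHROPIC_VERSION,
        "content-type": "application/json",
    }
    body = {
        "model": model,  # assumed model alias; check the Models API for exact IDs
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }
    return "https://api.anthropic.com/v1/messages", headers, json.dumps(body)
```

The thinking budget counts toward `max_tokens`, so the visible answer gets whatever remains after reasoning.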

OpenAI

API key: platform.openai.com → API Keys

Base URL: https://api.openai.com/v1

Capabilities: Chat, Embedding, Image Generation

| Model | Context | Output | Thinking | Vision | Tools |
|---|---|---|---|---|---|
| GPT-5.4 | 1.05M | 128K | Adaptive | Yes | Yes |
| GPT-5.4 Pro | 1.05M | 128K | Yes | Yes | Yes |
| GPT-5 Pro | 400K | 128K | Yes | Yes | Yes |
| GPT-5 | 400K | 128K | Yes | Yes | Yes |
| GPT-4.1 | 1.04M | 32K | No | Yes | Yes |
| GPT-4.1 Mini | 1.04M | 32K | No | Yes | Yes |
| GPT-4.1 Nano | 1.04M | 32K | No | Yes | Yes |

GPT-5.4 is the current flagship with a 1.05M token context window and adaptive thinking. The GPT-4.1 series offers million-token context without thinking — good for large document processing at lower cost.

Embedding models: text-embedding-3-large (3072 dimensions), text-embedding-3-small (1536 dimensions).
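The `text-embedding-3` models also accept an optional `dimensions` parameter that returns shorter vectors than the defaults listed above. A minimal request-building sketch, not QARK code; `build_embedding_request` is a hypothetical name and 256 is just an example size.

```python
import json

# Default vector sizes from the list above.
DEFAULT_DIMS = {"text-embedding-3-large": 3072, "text-embedding-3-small": 1536}


def build_embedding_request(texts, model="text-embedding-3-small", dimensions=None,
                            base_url="https://api.openai.com/v1"):
    """Build a POST /embeddings body; dimensions may only shrink the default size."""
    body = {"model": model, "input": texts}
    if dimensions is not None:
        if not 0 < dimensions <= DEFAULT_DIMS[model]:
            raise ValueError(f"{model} supports at most {DEFAULT_DIMS[model]} dimensions")
        body["dimensions"] = dimensions
    return base_url.rstrip("/") + "/embeddings", json.dumps(body)


url, body = build_embedding_request(["first chunk", "second chunk"], dimensions=256)
```

Smaller vectors reduce storage and search cost at some loss of retrieval quality.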

Image generation: gpt-image-1, gpt-image-1.5, gpt-image-1-mini. See Image Generation.

Use the custom base URL override to connect Azure OpenAI deployments and other OpenAI-compatible endpoints.

Google Gemini

API key: aistudio.google.com → Get API Key

Base URL: https://generativelanguage.googleapis.com

Capabilities: Chat, Embedding, Image Generation, Video Generation

| Model | Context | Output | Thinking | Vision | Tools |
|---|---|---|---|---|---|
| Gemini 3.1 Flash | 1.04M | 65K | Yes | Yes | Yes |
| Gemini 3.1 Flash Lite | 1.04M | 65K | Yes | Yes | Yes |
| Gemini 3 Pro | 1.04M | — | Yes | Yes | Yes |
| Gemini 3 | 1.04M | — | Adaptive | Yes | Yes |
| Gemini 2.5 Pro | 1.04M | 65K | Yes | Yes | Yes |
| Gemini 2.5 Flash | 1.04M | 65K | Yes | Yes | Yes |

All Gemini models accept about 1 million tokens (1.04M) of context. The 3.x series is the current generation, and Gemini 3 supports adaptive thinking mode.
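For the Gemini API itself, a chat turn is a `generateContent` call. This sketch targets Gemini 2.5 Flash, where `thinkingConfig.thinkingBudget = -1` requests dynamic thinking; the 3.x models may expose a different control, so treat the model ID and budget value as assumptions. `build_generate_request` is a hypothetical name.

```python
import json

GEMINI_BASE = "https://generativelanguage.googleapis.com"


def build_generate_request(api_key, prompt, model="gemini-2.5-flash", thinking_budget=-1):
    """Build a generateContent call; thinking_budget=-1 asks for dynamic thinking."""
    url = f"{GEMINI_BASE}/v1beta/models/{model}:generateContent"
    headers = {"x-goog-api-key": api_key, "Content-Type": "application/json"}
    body = {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        "generationConfig": {"thinkingConfig": {"thinkingBudget": thinking_budget}},
    }
    return url, headers, json.dumps(body)
```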

Embedding models: gemini-embedding-001, gemini-embedding-2-preview (multimodal, free tier).

Image generation: Imagen 4 (standard, Ultra, Fast), Gemini Flash/Pro native image generation. See Image Generation.

Video generation: Veo 2, Veo 3, Veo 3.1 (standard and fast variants). See Video Generation.

Free tier available with rate limits. Paid tier unlocks higher throughput.

Groq

API key: console.groq.com → API Keys

Base URL: https://api.groq.com/openai/v1

Capabilities: Chat

| Model | Context | Thinking | Vision | Tools |
|---|---|---|---|---|
| Llama 3.3 70B | 128K | No | No | Yes |
| Llama 3.1 8B | 128K | No | No | Yes |
| Mixtral 8x7B | 32K | No | No | Yes |
| Gemma 2 9B | 8K | No | No | No |

Groq runs models on custom LPU hardware — expect hundreds of tokens per second. All models are open-source (Meta Llama, Mistral Mixtral, Google Gemma). Free tier available with rate limits.
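Groq's endpoint is OpenAI-compatible, so requests use the standard chat-completions shape. Because Gemma 2 9B lacks tool support in the table above, a client has to drop tool definitions for it. The Groq model IDs below are assumptions (check the live model list), and `build_chat_request` and the `get_time` tool are hypothetical.

```python
import json

GROQ_BASE = "https://api.groq.com/openai/v1"

# Assumed Groq model IDs mapped to the Tools column above.
SUPPORTS_TOOLS = {
    "llama-3.3-70b-versatile": True,
    "llama-3.1-8b-instant": True,
    "mixtral-8x7b-32768": True,
    "gemma2-9b-it": False,
}


def build_chat_request(api_key, model, prompt, tools=None):
    """OpenAI-style chat request; tools are omitted for models without tool support."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    if tools and SUPPORTS_TOOLS.get(model, False):
        body["tools"] = tools
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    return f"{GROQ_BASE}/chat/completions", headers, json.dumps(body)


# Hypothetical tool definition in the OpenAI function-calling format.
get_time = {"type": "function",
            "function": {"name": "get_time", "parameters": {"type": "object", "properties": {}}}}
url, headers, body = build_chat_request("YOUR_KEY", "gemma2-9b-it", "What time is it?", [get_time])
```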

Together AI

API key: api.together.xyz → Settings → API Keys

Base URL: https://api.together.xyz/v1

Capabilities: Chat, Embedding

| Model | Context | Thinking | Vision | Tools |
|---|---|---|---|---|
| Llama 4 Scout | 512K | No | Yes | Yes |
| Kimi K2 Instruct | 128K | No | No | Yes |
| Llama 3.3 70B Turbo | 128K | No | No | Yes |
| DeepSeek R1 | 64K | Yes | No | Yes |
| Qwen3 32B | 128K | No | No | Yes |

Together AI hosts hundreds of open-source models from Meta, DeepSeek, Qwen, Mistral, and others, generally at a lower per-token cost than proprietary models of comparable capability.

Embedding models: BAAI/bge-large-en-v1.5, intfloat/multilingual-e5-large-instruct.

xAI

API key: console.x.ai → API Keys

Base URL: https://api.x.ai/v1

Capabilities: Chat, Image Generation, Video Generation

| Model | Context | Output | Thinking | Vision | Tools |
|---|---|---|---|---|---|
| Grok 4.1 Fast Reasoning | 2M | 128K | Always | Yes | Yes |
| Grok 4.1 Fast | 2M | 128K | No | Yes | Yes |
| Grok 4 | 256K | 128K | Always | Yes | Yes |
| Grok 4 Fast Reasoning | 2M | 128K | Always | Yes | Yes |
| Grok 4 Fast | 2M | 128K | No | Yes | Yes |
| Grok Code Fast | 256K | 128K | Always | No | Yes |
| Grok 3 | 128K | 128K | No | No | Yes |
| Grok 3 Mini | 128K | 128K | Yes | No | Yes |

Grok 4.1 Fast Reasoning is the current flagship — 2M token context, vision, reasoning, and tool use. The “Fast” variants without reasoning skip the thinking step for faster responses. Grok Code Fast is optimized for code tasks. Grok 3 Mini supports configurable reasoning effort (low/medium/high).
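Configurable reasoning effort for Grok 3 Mini is passed as a request field on xAI's OpenAI-compatible chat endpoint. In this sketch the field name `reasoning_effort`, the model ID `grok-3-mini`, and the accepted levels (taken from the paragraph above) are assumptions to verify against xAI's API reference. The always-reasoning models are treated as not accepting the setting. `build_grok_request` is a hypothetical name.

```python
import json

XAI_BASE = "https://api.x.ai/v1"
EFFORT_LEVELS = {"low", "medium", "high"}  # as listed above; verify against xAI's docs


def build_grok_request(api_key, prompt, model="grok-3-mini", effort=None):
    """Chat request; reasoning effort is only attached for Grok 3 Mini in this sketch."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    if effort is not None:
        if model != "grok-3-mini":
            raise ValueError("configurable effort is documented here only for Grok 3 Mini")
        if effort not in EFFORT_LEVELS:
            raise ValueError(f"effort must be one of {sorted(EFFORT_LEVELS)}")
        body["reasoning_effort"] = effort
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    return f"{XAI_BASE}/chat/completions", headers, json.dumps(body)
```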

Image generation: Grok Imagine Image, Grok Imagine Image Pro. See Image Generation.

Video generation: Grok Imagine Video. See Video Generation.

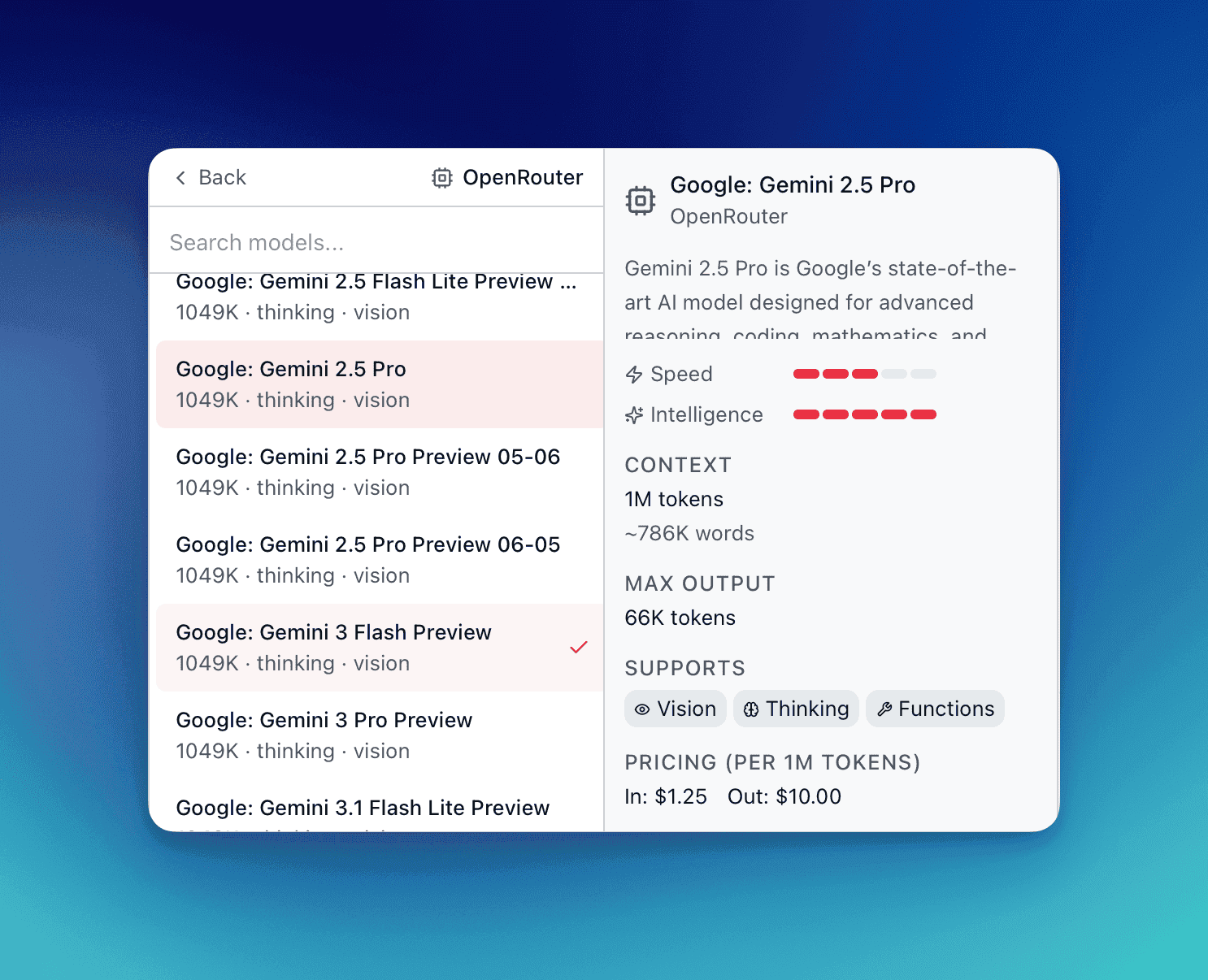

OpenRouter

API key: openrouter.ai → Keys

Base URL: https://openrouter.ai/api/v1

Capabilities: Chat, Embedding, Image Generation

OpenRouter aggregates models from dozens of providers through a single API key. Access Claude, GPT, Gemini, Llama, DeepSeek, Qwen, Mistral, and many more without signing up for each provider individually.

| Model (example) | Source | Thinking | Vision | Tools |
|---|---|---|---|---|
| Claude Sonnet 4.6 | Anthropic | Adaptive | Yes | Yes |
| GPT-5.4 | OpenAI | Adaptive | Yes | Yes |
| Gemini 3.1 Flash | Google | Yes | Yes | Yes |
| Llama 4 Scout | Meta | No | Yes | Yes |

Pricing is per token, based on the underlying provider’s rate plus a margin. An integrated Exa search plugin adds web search augmentation. Check openrouter.ai/models for the current model list and pricing.
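Through OpenRouter, one key reaches every listed model; the model ID carries the vendor prefix (`vendor/model`). The sketch enables web search with a `plugins` entry whose `id` is `"web"`. That plugin name, and the example slug `meta-llama/llama-4-scout`, are assumptions to check against openrouter.ai/models and OpenRouter's docs. `build_openrouter_request` is a hypothetical name.

```python
import json

OPENROUTER_BASE = "https://openrouter.ai/api/v1"


def build_openrouter_request(api_key, model, prompt, web_search=False):
    """Chat request through OpenRouter; model IDs take the form vendor/model."""
    if "/" not in model:
        raise ValueError("OpenRouter model IDs look like 'vendor/model'")
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    if web_search:
        # Assumed plugin ID for the Exa-backed web search mentioned above.
        body["plugins"] = [{"id": "web"}]
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    return f"{OPENROUTER_BASE}/chat/completions", headers, json.dumps(body)


url, headers, body = build_openrouter_request(
    "YOUR_KEY", "meta-llama/llama-4-scout", "Summarize today's AI news", web_search=True)
```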

Embedding models: 20+ models including OpenAI, Mistral, Qwen, and Sentence Transformer variants.

Image generation: Gemini Flash/Pro image, FLUX.2 variants, Seedream, Riverflow. See Image Generation.

Perplexity

API key: perplexity.ai → API Settings

Base URL: https://api.perplexity.ai

Capabilities: Chat

| Model | Context | Key feature |
|---|---|---|
| Sonar Pro | 200K | Deep research with citations |
| Sonar | 128K | Fast search-augmented responses |

Sonar models search the web before responding — answers are grounded in current information with source URLs. No separate search tool setup needed.
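Because the sources arrive alongside the answer, a client only needs to read them out of the response. This sketch assumes an OpenAI-style response with a top-level `citations` list of URLs and, in newer responses, a `search_results` list of objects with a `url` field. Those field names should be checked against Perplexity's current API reference. The sample response is hypothetical.

```python
def extract_answer_and_sources(response: dict):
    """Return the answer text and a de-duplicated, order-preserving list of source URLs."""
    answer = response["choices"][0]["message"]["content"]
    urls = list(response.get("citations", []))
    urls += [r["url"] for r in response.get("search_results", []) if "url" in r]
    seen, sources = set(), []
    for url in urls:
        if url not in seen:
            seen.add(url)
            sources.append(url)
    return answer, sources


# Hypothetical response shape, for illustration only.
sample = {
    "choices": [{"message": {"role": "assistant", "content": "Answer text [1][2]"}}],
    "citations": ["https://a.example/post", "https://b.example/page"],
    "search_results": [{"title": "A", "url": "https://a.example/post"}],
}
answer, sources = extract_answer_and_sources(sample)
```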

DeepSeek

API key: platform.deepseek.com → API Keys

Base URL: https://api.deepseek.com

Capabilities: Chat

| Model | Context | Thinking | Vision | Tools |
|---|---|---|---|---|
| DeepSeek V3 | 64K | No | No | Yes |
| DeepSeek R1 | 64K | Yes | No | Yes |

DeepSeek R1 performs explicit chain-of-thought reasoning, showing its work step-by-step. Strong on coding, math, and logic tasks. Significantly lower per-token cost compared to proprietary models of similar capability.
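On DeepSeek's API, R1's chain of thought comes back in a separate `reasoning_content` field next to the final `content`. This sketch assumes the API IDs `deepseek-chat` and `deepseek-reasoner` map to the two models above (DeepSeek may remap them to newer versions). It also assumes the API rejects `reasoning_content` in input messages, so only the answer goes back into the history. `build_request` and `handle_reply` are hypothetical names.

```python
import json

DEEPSEEK_BASE = "https://api.deepseek.com"

# Assumed API model IDs for the two models in the table above.
MODEL_IDS = {"DeepSeek V3": "deepseek-chat", "DeepSeek R1": "deepseek-reasoner"}


def build_request(api_key, model_name, history):
    """OpenAI-style chat request for one of the models listed above."""
    body = {"model": MODEL_IDS[model_name], "messages": history}
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    return f"{DEEPSEEK_BASE}/chat/completions", headers, json.dumps(body)


def handle_reply(history, response):
    """Split R1's reasoning from its answer; append only the answer to the history."""
    message = response["choices"][0]["message"]
    reasoning = message.get("reasoning_content") or ""
    answer = message.get("content") or ""
    history.append({"role": "assistant", "content": answer})
    return reasoning, answer
```

Keeping the reasoning out of the history also keeps later turns short, since chain-of-thought text can be much longer than the answer.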